A viral post on r/ClaudeAI got a lot of attention this month. The framing, near as I can tell from secondhand summaries, was simple: a nursing student reportedly used Claude Haiku to build a 660,000-page document database covering medical and pharmacology references. The community reaction split fast into two camps. The cheerleaders said “look what one developer can build with AI now.” The pile-on said “take it down NOW, this is dangerous.” Both reactions are partially right. Both miss the actual lesson.

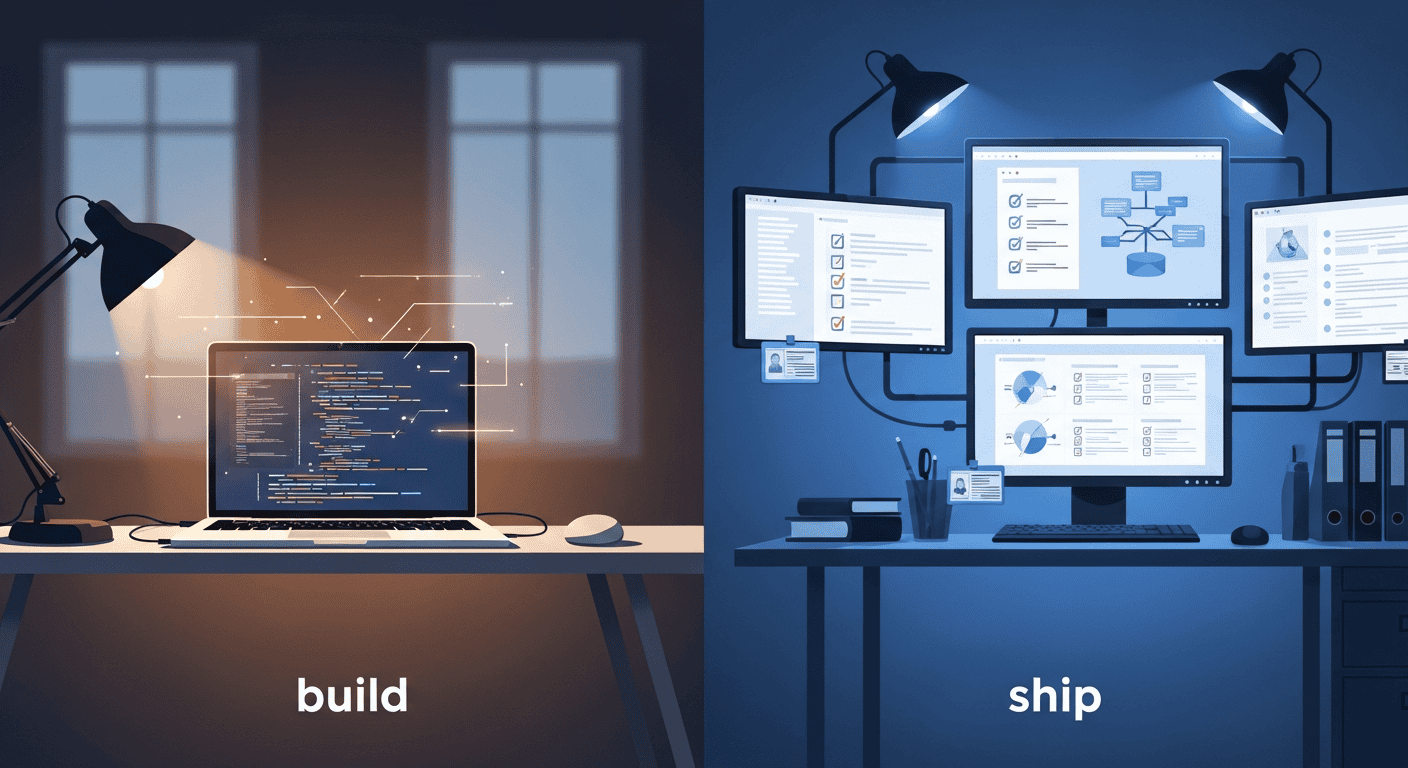

I haven’t read the thread directly. Reddit was firewalled when our research pass tried to fetch it, and I haven’t audited the project’s medical content myself. But the discourse around it has been everywhere this week, and the shape of the criticism is consistent enough across summaries to talk about. What I keep coming back to, watching it play out, is something almost nobody is naming: AI tools democratized the BUILD step. They did not change the bar for SHIPPING something other people will rely on. Those are two different responsibilities, and the gap between them is what trips up almost every “I made X with AI” project.

I run Trigli, my AI customer support product, so I’m not coming at this from the cheap seats. I use the Claude API daily. I’ve felt the difference between “this works on my machine” and “this is safe to put in front of a paying customer.” That difference is the whole post.

Give the Build Genuine Credit First

Before any of the criticism is fair, the build deserves real respect.

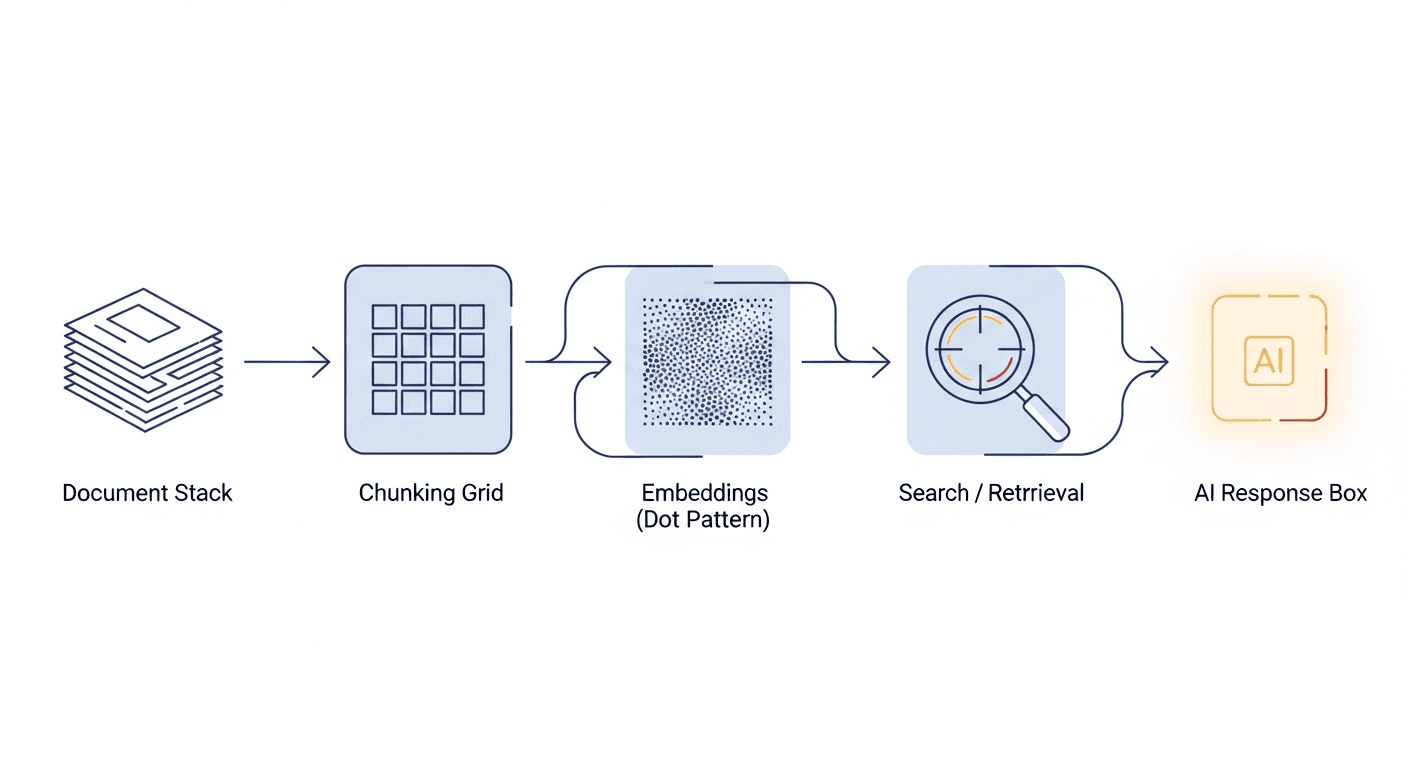

A Claude Haiku side project at the scale being described (660,000 pages indexed, working RAG retrieval, a single developer, on the cheapest tier of a frontier-quality API) is a real engineering accomplishment. Pretending otherwise to score discourse points is dishonest. Two years ago, that build would have required a small team, a vector-database vendor contract, and a budget conversation. The fact that one person can stand it up on the side now is not nothing. It’s the news.

This is also, to be specific, the kind of thing that gets dismissed as “vibe coding” by people who have never shipped anything. Vibe coding gets you to a working prototype faster than careful engineering does. That’s a real advantage. The criticism worth having is not “you shouldn’t have built it.” It’s “you should have known the difference between this prototype and a tool people rely on.” Those are very different conversations.

So credit first. The build is real. The achievement is real. Anything that follows is a discussion of where the bar moves when other people enter the picture.

What the Critics Got Right (Only What They Got Right)

The community surfaced four genuine issues, and they’re worth separating from the mob energy around them. These are the parts of the criticism that hold up.

Haiku is the wrong model tier for high-stakes medical content. Claude Haiku is built for speed and cost, not for catching subtle clinical detail. Sonnet or Opus would catch more, and even those are not a substitute for human-curated medical references. Picking Haiku for a hobby pharmacology lookup is a reasonable cost call. Picking Haiku for content other people will use to think about real medications is not. The reportedly-chosen model tier is a mismatch with the stated use case.

Missing FDA boxed warnings is a real problem, not a nitpick. The example the community surfaced was Clonazepam, a benzodiazepine. Clonazepam carries an FDA boxed warning, the agency’s strongest, covering abuse, addiction, dependence, withdrawal, and the well-documented danger of concurrent use with opioids. The benzodiazepine class warning was updated in September 2020 specifically to make these risks more prominent. If a drug-reference tool surfaces Clonazepam without that warning, it has lost its right to be called a drug-reference tool. That’s not pedantry. The boxed warning is the single most important piece of content on the label.

A disclaimer is not a liability shield. This part of the criticism is legally accurate. “I added a disclaimer that says don’t use this for medical decisions” does not work the way people on the internet think it does. It might shape how a court looks at your intent. It does not create a cone of immunity around content that materially misleads a user.

Professional drug-reference databases exist for a reason. Tools like Epocrates, Micromedex, and Lexidrug (Lexicomp) have rigorous editorial pipelines, daily updates, expert review, and decades of institutional accountability. A vibe-coded RAG does not approximate that, and it does not replace it. When the underlying domain has consequences, the gap between the two categories is the whole game.

These four points hold up. Anyone defending the build should be able to look at all four and say yes, those are real. They are.

What the Critics Got Wrong (or Overblown)

The same thread also surfaced reactions that don’t hold up the same way.

The “take it down NOW” energy is mob-flavored, not advice. It signals tribe, not analysis. There’s a meaningful difference between “this needs material fixes before others rely on it” and “this should not exist,” and the thread mostly collapsed both into the second verdict. That’s lazy.

The amateur legal takes also overstate the case. Internet liability hot takes treat every project as if it’s a sold SaaS with paying customers and a clinical decision-support label. The reportedly-described project is a nursing student’s side project, not a sold product or a clinical-grade tool. That distinction matters legally. The risk profile of “I made a thing and put it on the web” is real, but it’s not “you’re going to lose your license and get sued into the ground.” Both extremes get spoken with equal confidence; only one of them is calibrated.

And the discourse mostly under-credited the actual achievement. The same people calling for takedown were happy to ignore that the build is, at the engineering level, impressive. You can hold both. Real accomplishment, real flaws, real fixes needed before this serves anyone but the builder. That’s a calm and accurate position. It also gets less engagement than either pure cheerleading or pure outrage, which is part of why it doesn’t show up much.

So Who Actually Got It Right?

The Build/Ship Distinction Almost Nobody Is Articulating

Here is the lesson the cheerleaders missed and the pile-on buried.

AI tools made BUILDING dramatically easier. Anyone with patience and a credit card can stand up a working RAG against a six-figure-page corpus on a weekend. That is a genuine democratization of the build step, and it deserves to be celebrated as such.

AI tools did not change the bar for SHIPPING something other people will rely on.

Those are two different responsibilities. Build is “can I get this working at all?” Ship is “is this safe to put in front of a user who is going to depend on it?” They look similar from the outside. They are not the same job.

When the thing you are building is for YOU, vibe coding is fine. Build whatever. Get it working. Iterate at the speed of your own curiosity. If it breaks, the only person who pays is you. That’s the dream of solo development and AI tools made the dream more accessible than ever. I’m a fan.

When the thing you are building is for OTHERS, particularly in domains where being wrong has consequences, the bar moves. Medical, legal, financial, safety-critical. The model can generate plausible-sounding answers in any of those domains. It cannot tell you which of its plausible-sounding answers is going to harm someone. That responsibility does not transfer to the model. It stays with the person shipping the tool.

That’s the gap. Build is a code problem. Ship is an editorial problem, an architectural problem, and a domain-expertise problem. AI helps enormously with the first. It helps surprisingly little with the other three. Almost every “I built X with AI” story I’ve watched run into trouble has run into trouble at exactly that handoff.

The viral Claude Haiku side project did not invent this gap. It just made it more visible than usual, because the domain is one where the consequences of getting it wrong are unusually concrete. Medical reference content with missing boxed warnings has a name for what it is. Most domains where the same gap exists are subtler.

How to Tell Which Side of the Line Your Project Is On

A quick practical heuristic, because abstract distinctions are only useful if they help you act.

Ask yourself: if a stranger uses this and the output is wrong, who pays? If the answer is “me, I look stupid in my git history,” you are on the build side. Vibe code with abandon. The stakes are calibrated to your own embarrassment, which is healthy.

If the answer is “the user pays, possibly in time, possibly in money, possibly in something they cannot get back,” you are on the ship side. The bar is higher and the work is different. Examples of what ship-grade looks like in domains with consequences:

- Human-curated reference data rather than scraped or generated content. Especially for anything regulated, current, or contested.

- Expert review of the output domain, not just the code. A nurse, lawyer, accountant, or safety engineer reviews what the tool actually surfaces, not just whether the buttons work.

- Architectural humility about what you choose to surface. Sometimes the right answer is for the tool to refuse a query, not generate a confident-sounding partial answer. Knowing what to leave out is a ship-side skill.

- Real disclaimers paired with real architecture. A disclaimer plus careful scoping plus expert review is a defensible package. A disclaimer alone is a fig leaf.

- A way to update. Boxed warnings change. Drug interactions change. Tax law changes. A static dump from your weekend ingest run is out of date the day you publish.
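The refusal, review, and freshness points in the list above can be made concrete. What follows is a minimal sketch, not a description of how the project in question works: the record shape (`ReferenceRecord`), the function name (`answer_or_refuse`), and the 30-day freshness window are all hypothetical, and the drug names and texts are placeholders. The idea is that the tool declines to answer unless the underlying entry has been expert-reviewed, is recent, and carries its boxed warning when one exists.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

MAX_AGE = timedelta(days=30)  # hypothetical freshness window


@dataclass
class ReferenceRecord:
    """Hypothetical shape for one curated drug-reference entry."""
    drug: str
    summary: str
    has_boxed_warning: bool
    boxed_warning_text: Optional[str]
    reviewed_by_expert: bool
    last_verified: date


def answer_or_refuse(record: ReferenceRecord, today: date) -> str:
    """Return the reference text, or a refusal if the entry is not ship-grade."""
    if not record.reviewed_by_expert:
        return "REFUSED: entry has not been reviewed by a domain expert."
    if today - record.last_verified > MAX_AGE:
        return "REFUSED: entry is stale and needs re-verification."
    if record.has_boxed_warning and not record.boxed_warning_text:
        return "REFUSED: boxed warning exists but its text is missing."
    if record.has_boxed_warning:
        # Lead with the warning so it cannot be dropped from the answer.
        return f"BOXED WARNING: {record.boxed_warning_text}\n\n{record.summary}"
    return record.summary


# Placeholder entry: flagged as having a boxed warning, but the text is absent.
entry = ReferenceRecord(
    drug="example-drug",
    summary="placeholder summary",
    has_boxed_warning=True,
    boxed_warning_text=None,
    reviewed_by_expert=True,
    last_verified=date(2026, 4, 1),
)
print(answer_or_refuse(entry, today=date(2026, 4, 15)))
```

The design choice worth noticing is that every failure mode ends in a refusal rather than a partial answer. None of this replaces the expert review itself; it only keeps unreviewed or outdated entries from reaching a user.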

I think about this constantly with Trigli. The product handles real customer support for real businesses, which means if I get this wrong, a paying customer’s customer pays. That changes how I think about everything from prompt design to fallback behavior to what the system is allowed to say without escalation. It’s slower. It’s more expensive. It’s also the actual job.

A Quick Note on the Cost Math People Keep Asking About

| Token type (Claude Haiku 4.5) | Price per 1M tokens |
|---|---|
| Input (uncached) | $1.00 |
| Output | $5.00 |
| Cache write (5 min TTL) | $1.25 |
| Cache read | $0.10 |

A lot of the cheerleading framing focused on cost, which is fair. The economics of a Claude Haiku side project at this scale are interesting on their own terms, even if you separate them from the question of whether the project should be public.

As of April 2026, Claude Haiku 4.5 pricing is roughly:

- Input tokens: $1.00 per million

- Output tokens: $5.00 per million

- Prompt cache writes (5-minute TTL): $1.25 per million tokens

- Prompt cache reads: $0.10 per million tokens

The number that changes the economics of a build like this is the cache-read rate. Reads cost 10% of base input price. If you have a chunk of context that gets reused across queries, caching it pays for itself after a single read. On any sustained workload, prompt caching is not a feature, it is the budget mechanic.
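The break-even claim is easy to check with arithmetic. Here is a minimal sketch, assuming the per-million prices quoted above are current and that every call after the first actually hits the cache (that is, all calls land inside the TTL). The helper name `cached_vs_uncached` is mine, not part of any SDK.

```python
# Prices in dollars per million tokens, copied from the list above.
INPUT_PER_M = 1.00
CACHE_WRITE_PER_M = 1.25
CACHE_READ_PER_M = 0.10


def cached_vs_uncached(prefix_tokens: int, calls: int) -> tuple[float, float]:
    """Return (uncached_cost, cached_cost) in dollars for a reused prefix.

    Assumes the first call writes the cache and every later call reads it.
    """
    millions = prefix_tokens / 1_000_000
    uncached = calls * millions * INPUT_PER_M
    cached = millions * (CACHE_WRITE_PER_M + (calls - 1) * CACHE_READ_PER_M)
    return uncached, cached


for calls in (1, 2, 10):
    uncached, cached = cached_vs_uncached(10_000, calls)
    print(f"{calls:>2} calls: uncached ${uncached:.4f}  cached ${cached:.4f}")
```

With a single call the cached path costs more, because you pay the write premium and never read. From the second call on it is cheaper, which is the “pays for itself after a single read” point.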

Worked example. A retrieval pulls 20 chunks at 500 tokens each, plus a system prompt of 1,500 tokens, plus the user’s question at 100 tokens. That is about 11,600 input tokens per query. Without caching, and assuming a 400-token response, each query costs about $0.014 for input plus output. If the system prompt and retrieved chunks are served from cache, which only happens when the same prefix is reused across queries, the same query settles closer to $0.003. Across a million queries that’s the difference between roughly $14,000 and $3,000.

There is also a quieter trap. Output tokens cost five times more than input tokens at this tier. Generation-heavy workloads, anything with long-form responses, will be dominated by output cost no matter how clever the input handling is. Strategies that help: cap max_tokens, prompt for terse structured output, stream so you can stop early. None of those are flashy. All of them matter.
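To see how quickly output takes over the bill, here is a small sketch using the same quoted prices. The function name `output_share` and the token counts are illustrative assumptions, not measurements from any real workload.

```python
INPUT_PER_M = 1.00   # uncached input, dollars per million tokens (quoted above)
OUTPUT_PER_M = 5.00  # output, dollars per million tokens (quoted above)


def output_share(input_tokens: int, output_tokens: int) -> float:
    """Fraction of one call's cost that comes from output tokens."""
    input_cost = input_tokens / 1_000_000 * INPUT_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_PER_M
    return output_cost / (input_cost + output_cost)


# Same 1,600 uncached input tokens, three different response lengths.
for output_tokens in (400, 1_000, 4_000):
    share = output_share(1_600, output_tokens)
    print(f"{output_tokens:>5} output tokens: {share:.0%} of the call cost")
```

Under these assumptions a 400-token reply is already a bit more than half the cost of the call, and a 4,000-token reply is over 90% of it. That is why capping `max_tokens` tends to matter more than further trimming the input.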

The math is the same whether the build is for you or for others. The math is also not what determines which side of the build/ship line a project is on. It’s worth keeping those clear.

```python
# Typical Haiku-tier RAG call shape (Python). The cache_control block is
# how you signal "reuse this big chunk on the next call instead of paying
# for it again." That single flag is what flips the math from roughly $14
# to under $5 per 1,000 queries in the breakdown below.
import anthropic

# Placeholders so the snippet runs as written; swap in real content.
RETRIEVED_DOCS = "..."  # stands in for ~10,000 tokens of retrieved chunks
USER_QUERY = "..."      # stands in for ~1,600 tokens of instructions plus question

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

response = client.messages.create(
    model="claude-haiku-4-5",
    max_tokens=400,
    system=[
        {
            "type": "text",
            "text": RETRIEVED_DOCS,  # ~10,000 input tokens
            "cache_control": {"type": "ephemeral"},
        }
    ],
    messages=[
        {"role": "user", "content": USER_QUERY}  # ~1,600 input tokens
    ],
)

# Per-query cost on Claude Haiku 4.5, 11,600 input tokens + 400 output.
# Without caching:
#   input:   11,600 / 1,000,000 * $1.00 = $0.0116
#   output:     400 / 1,000,000 * $5.00 = $0.0020
#   total:   $0.0136 / query
#   1,000 queries: $13.60
#
# With prompt caching (10K of input shared and reused, 1 cache write;
# the other 1,600 input tokens are billed at the full input rate):
#   cache write (1x):   10,000 / 1M * $1.25 = $0.0125 (one-time)
#   cache read (999x):  10,000 / 1M * $0.10 = $0.0010 / call
#   fresh input:         1,600 / 1M * $1.00 = $0.0016 / call
#   output:                400 / 1M * $5.00 = $0.0020 / call
#   per-call (cached):  $0.0046
#   1,000 queries: $0.0125 + (999 * $0.0046) = $4.61
#
# Same workload, ~3x cheaper. The build is the API call. The savings
# are the architecture decision behind it.
```

If this build-versus-ship gap is something you keep running into on your own projects, Chip Huyen’s book AI Engineering: Building Applications with Foundation Models walks through the entire production lifecycle for LLM apps in detail, including evaluation, monitoring, and the validation step our viral side project mostly skipped. It is the closest thing I’ve seen to a real ship-side checklist for this category.

The Real Lesson This Project Made Visible

The viral Claude Haiku side project was not unprecedented in either direction. Plenty of solo developers have built impressive things with AI assistance. Plenty of solo developers have shipped tools that should not have been shipped without more review. What this one did was put both halves on the same canvas at the same time, which made the build/ship distinction unusually easy to see.

That distinction is the lesson worth carrying out of this whole news cycle. AI tools are an extraordinary build accelerant. They do not change the work of shipping. The two things look similar at small scales and diverge at scale, and the divergence is where almost every project gets into trouble.

If you are building for yourself, ignore most of this and have fun. The bar for shipping to others is not the bar for building for yourself, and pretending it is would just gatekeep an enormously productive era of solo development. But if you are putting something in front of others, especially in any domain where being wrong has consequences, the build is the easy part. The shipping is the job. Take it that seriously and the rest sorts itself out. (Part of that seriousness, if you’re running autonomous agents, is thinking hard about how much blast radius your agent actually has before something breaks the wrong way.)

Sources

- r/ClaudeAI: I’m a nursing student who built a 660K-page database (original Reddit thread that prompted this analysis; direct fetch was blocked, so all thread-specific details in this post are framed as community-surfaced or reportedly described)

- FDA: Updated Boxed Warning to improve safe use of benzodiazepine drug class (verifies the Clonazepam boxed-warning point)

- Anthropic: Introducing Claude Haiku 4.5 (official pricing announcement)

- Anthropic Claude API Docs: Prompt caching (cache write/read pricing and TTL options)

- How I Use Claude Code to Run This Entire Blog (companion post on real Claude usage at smaller scale)

- I Built an AI That Handles Customer Support So Small Businesses Don’t Have To (background on Trigli, the SaaS Tommy uses Claude API for daily)

Your Turn

Where have you seen the build/ship gap show up in your own AI projects? The place I see it most often is right after a working prototype, when the next decision is whether to put it in front of someone who will rely on it. That’s the point where most people I know have either thought hard or hit trouble. If you’ve shipped something through that handoff, what changed for you between build and ship? Drop a comment. The middle of that conversation is where most of the useful stuff lives. (If you’re also trying to figure out whether any of this can become a real income source, I looked at that directly in a separate post on the AI side hustle reality check. And if you run Claude Code daily, I wrote about how Claude Code handles long sessions from the operator side. The same build-vs-ship question shows up again when Anthropic offers to take the agent harness off your hands; my honest take on Claude Managed Agents walks through which of the new features I’d adopt and which lock-in I’d refuse.)
